Special cameras

In addition to the simulation of the cameras belonging to the real drone, Parrot Sphinx offers extra types of cameras, which leverage the availability of the ground truth.

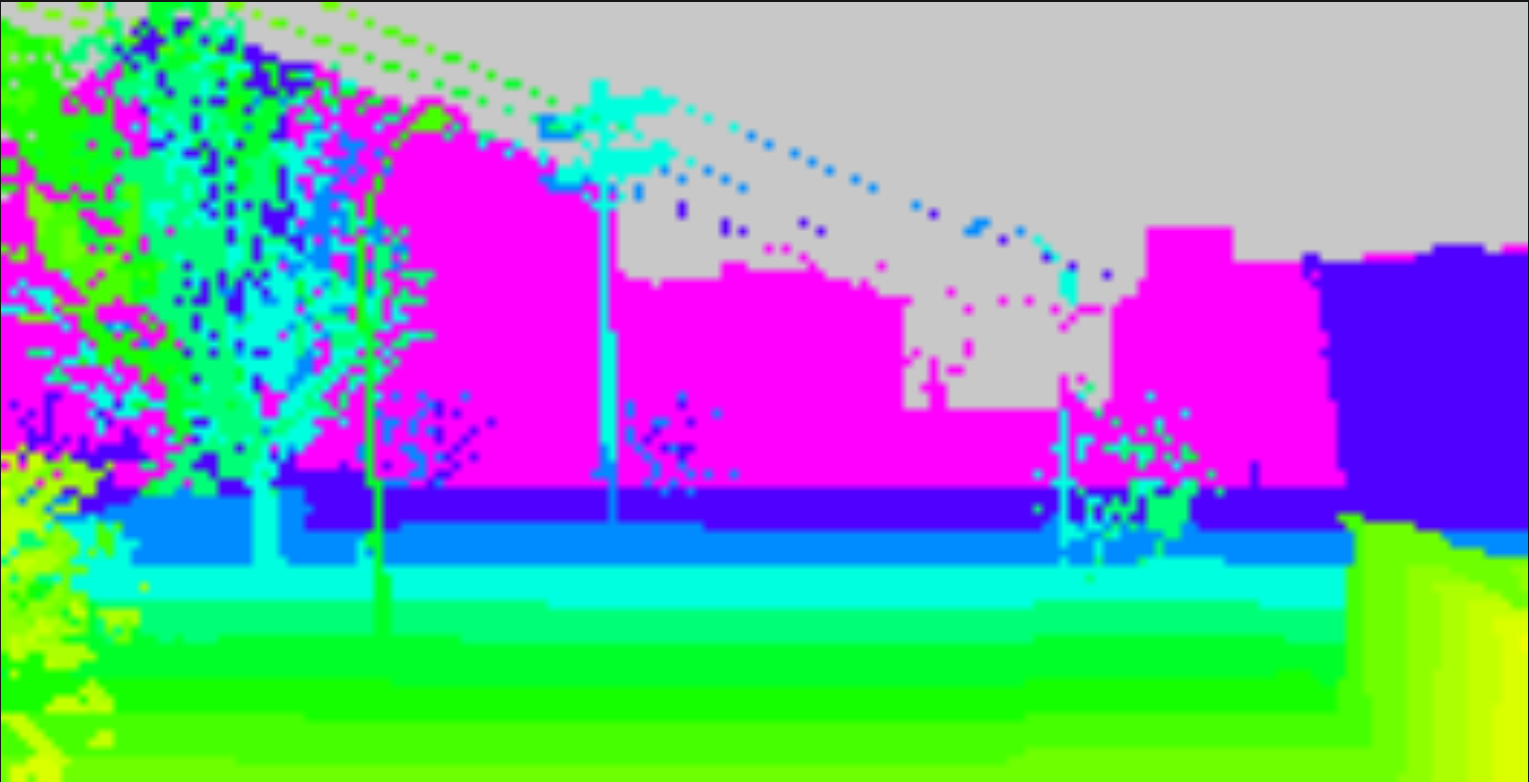

Depth, disparity, and distance cameras

A depth camera with a colour gradient showing the distance to objects.

The simulated ANAFI Ai comes with one depth camera, one disparity camera, and one distance camera, all located at the same place as the right stereo camera.

They produce ideal depth, disparity, and distance values.
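The three quantities are related by simple geometry. The sketch below is only an illustration under the usual pinhole and rectified-stereo conventions, which this page does not spell out: depth is taken as the coordinate along the optical axis, distance as the Euclidean range to the point, and disparity as `focal_px * baseline_m / depth`. The function names and parameters (`focal_px`, `baseline_m`, `cx`, `cy`) are hypothetical and are not part of Parrot Sphinx.

```python
import math


def depth_to_disparity(depth_m, focal_px, baseline_m):
    """Disparity in pixels for a rectified stereo pair: d = f * B / Z.

    Assumption: depth_m is the Z coordinate along the optical axis.
    """
    if depth_m <= 0:
        raise ValueError("depth must be positive")
    return focal_px * baseline_m / depth_m


def depth_to_distance(depth_m, u, v, cx, cy, focal_px):
    """Euclidean range to the point seen at pixel (u, v), given its depth Z.

    Assumption: pinhole camera with principal point (cx, cy) and the same
    focal length (in pixels) on both axes.
    """
    x = (u - cx) / focal_px  # normalized image coordinate along X
    y = (v - cy) / focal_px  # normalized image coordinate along Y
    return depth_m * math.sqrt(1.0 + x * x + y * y)
```

Under these assumptions, depth and distance coincide only at the principal point; towards the image borders the distance is larger than the depth.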

Note

Some special values for the gradient:

gray: infinity

black / dark gray: pixels occluded by the drone body

white: invalid pixels

They are highly configurable (see HERE) so that you can adjust the quantization error of the computed values and add different kinds of noise. The distance camera can be configured as a wide-angle camera (see HERE), while the depth and disparity cameras cannot.
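To make the effect of quantization and noise concrete, here is a minimal sketch of how an ideal value could be degraded. It assumes uniform quantization with a fixed step and additive Gaussian noise; the actual models and parameter names used by Parrot Sphinx are documented on the configuration page and may differ. `quantize`, `degrade_depth`, `step_m`, and `noise_sigma_m` are hypothetical names.

```python
import random


def quantize(value, step):
    """Round a value to the nearest multiple of step (uniform quantization)."""
    if step <= 0:
        raise ValueError("step must be positive")
    return round(value / step) * step


def degrade_depth(depth_m, step_m, noise_sigma_m, rng):
    """Add zero-mean Gaussian noise to an ideal depth, then quantize it.

    Assumption: this noise-then-quantize order and both models are
    illustrative only, not the simulator's implementation.
    """
    noisy = depth_m + rng.gauss(0.0, noise_sigma_m)
    return quantize(noisy, step_m)


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed for a repeatable run
    print(degrade_depth(5.0, step_m=0.05, noise_sigma_m=0.02, rng=rng))
```

With uniform quantization, the error introduced by the rounding step alone never exceeds half the step size.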

Note that objects that use translucent materials are configured to be visible to these cameras.

If you created your own UE4 application, making translucent materials visible requires a bit of extra care, as explained HERE.

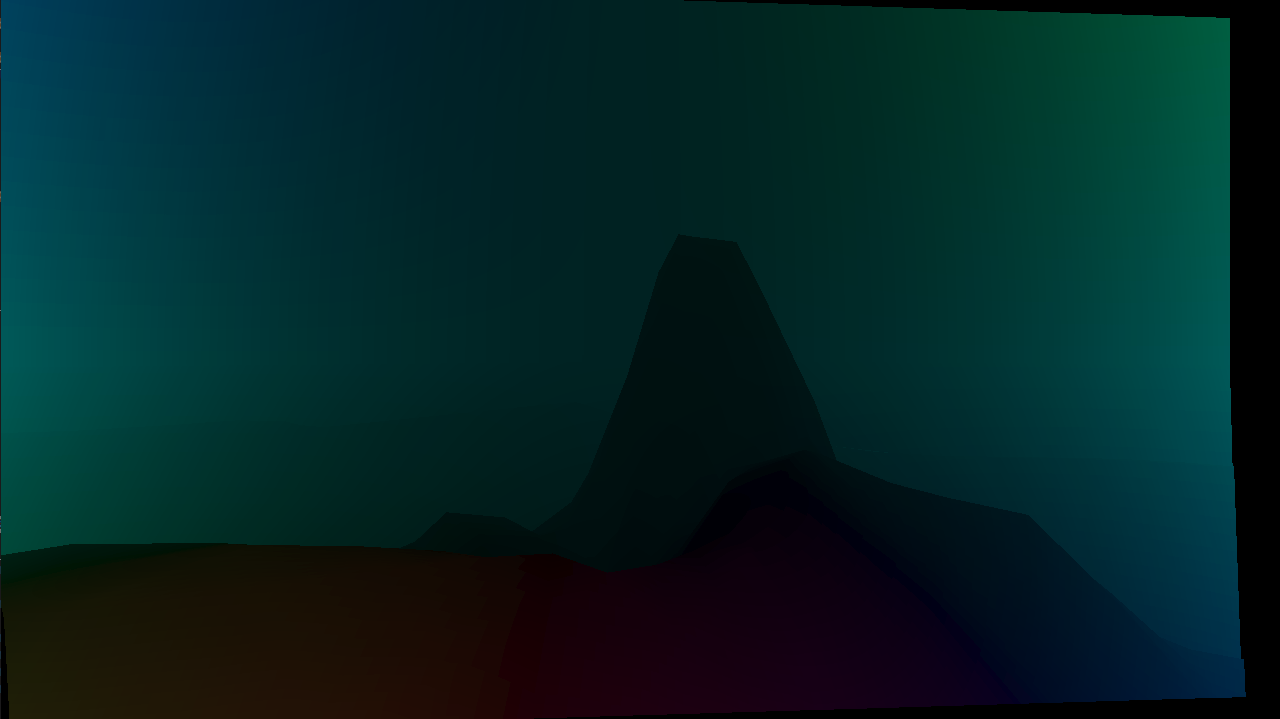

Optical flow camera

This camera generates images that contain the motion [X, Y] of each pixel with respect to the previous frame. The motion is expressed in image pixels as a decimal (non-integer) value.

A description of the related parameters can be found HERE.

Limitations:

The computed motion only takes into account the change of camera position between two consecutive frames. Therefore, animations of foliage and movements of actors (such as vehicles) are ignored.

While it is possible to mask pixel motion for pixels that were not in the field of view in the previous frame, pixels that were hidden by a foreground object cannot be masked.
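As an illustration of how per-pixel motion can be used, and of the field-of-view masking mentioned in the limitations above, the following sketch recovers where a pixel was in the previous frame. It assumes that the [X, Y] value stored at pixel (u, v) is the displacement from the previous frame to the current one; the sign convention and the helper names are assumptions, so check them against the parameter description before relying on this.

```python
import math


def previous_position(u, v, flow_xy):
    """Position of pixel (u, v) in the previous frame.

    Assumption: flow_xy = (dx, dy) is the motion from the previous frame
    to the current one, in (possibly fractional) pixels.
    """
    dx, dy = flow_xy
    return u - dx, v - dy


def was_in_view(u, v, flow_xy, width, height):
    """True if the pixel's previous position lies inside the image bounds."""
    pu, pv = previous_position(u, v, flow_xy)
    return 0.0 <= pu <= width - 1 and 0.0 <= pv <= height - 1


def flow_magnitude(flow_xy):
    """Length of the motion vector, in pixels."""
    return math.hypot(flow_xy[0], flow_xy[1])
```

This kind of bounds check can only detect pixels that entered the field of view. It cannot detect pixels that were hidden behind a foreground object in the previous frame, which is the second limitation listed above.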

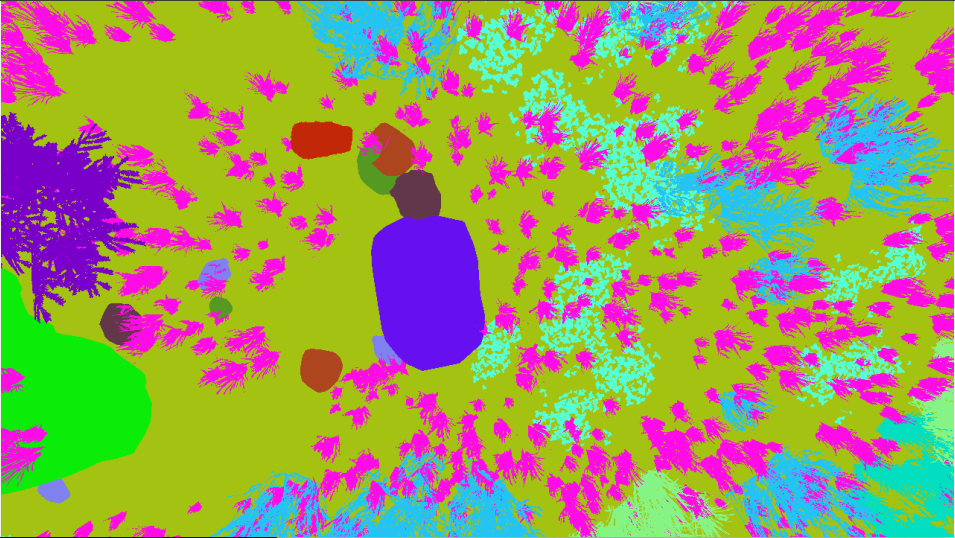

Segmentation camera

Segmentation cameras generate frames that are partitioned into regions, each representing one object of the scene or one type of object. The color of each region is mapped to the related object or type.

These cameras are capable of working in wide-angle mode.
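Because each color maps to an object or an object type, a segmentation frame can be decoded with a simple lookup. The sketch below counts pixels per label in a frame given as rows of RGB tuples. The palette and the label names are invented for the example; the real mapping comes from the segmentation configuration described in the dedicated section.

```python
from collections import Counter

# Hypothetical color-to-label palette, for illustration only.
EXAMPLE_PALETTE = {
    (255, 0, 0): "vehicle",
    (0, 255, 0): "vegetation",
}


def count_labels(frame, palette):
    """Count the pixels of each label in a frame of RGB tuples.

    Colors missing from the palette are counted under "unknown".
    """
    counts = Counter()
    for row in frame:
        for rgb in row:
            counts[palette.get(tuple(rgb), "unknown")] += 1
    return counts


if __name__ == "__main__":
    frame = [
        [(255, 0, 0), (0, 255, 0)],
        [(255, 0, 0), (1, 2, 3)],
    ]
    print(count_labels(frame, EXAMPLE_PALETTE))
```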

Many more details are presented in this dedicated section.